Neural Bases of Visual Plasticity

One dramatic example of visual neuroplasticity occurs when the visual environment suddenly changes, for example when we put on colored glasses. In such cases, visual neurons alter their firing properties in order to keep us seeing colors accurately. Critically, the longer the visual system is exposed to an environment, the stronger and longer lasting this adaptation becomes. This suggests that long-term exposure to altered visual input may produce long-lasting changes in cortex. The neural bases of such long-lasting adaptation remain unknown, however. Current work in the lab uses fMRI and EEG to measure which parts of the brain are affected by long-term adaptation and, more importantly, how the properties of neurons in those regions change.

We are also interested in how the brain learns to shift seamlessly between adaptive states with practice. For example, experience wearing red glasses allows the brain to compensate more and more rapidly for the changes in color the glasses produce, and eventually colors appear almost completely normal as soon as the glasses are put on. What mechanisms produce such "mode switches", and how are they learned?

Visual Plasticity Using Altered Reality

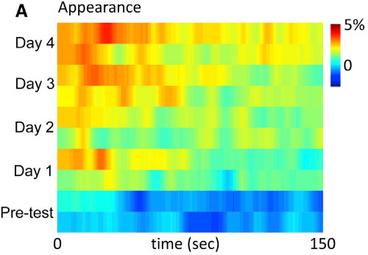

We also use novel "altered reality" technology, in which images from a camera are processed in real time and shown on a head-mounted display. This has allowed us to place participants in novel visual environments and have them adapt for long durations. In one study, for example, subjects viewed a world missing vertical orientations for 4 consecutive days.

This work is testing just how strong and long-lasting the plasticity produced by adaptation can become. We are also uncovering factors that limit adaptation, and testing paradigms to make its effects larger and more permanent, in order to aid development of therapies for disease.
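To make the "world missing vertical orientations" manipulation concrete, here is a minimal sketch of one way such a filter could be applied to a single grayscale camera frame. It is an illustration only, not the lab's actual pipeline: the function name `remove_vertical_orientations`, the `half_width_deg` parameter, and the hard-edged Fourier mask are all assumptions made for this example.

```python
import numpy as np

def remove_vertical_orientations(image, half_width_deg=22.5):
    """Remove image structure oriented near vertical (hypothetical sketch).

    image: 2-D float array (grayscale frame).
    half_width_deg: assumed half-width of the removed orientation band.
    """
    rows, cols = image.shape
    fy = np.fft.fftfreq(rows)[:, None]   # vertical spatial frequencies
    fx = np.fft.fftfreq(cols)[None, :]   # horizontal spatial frequencies

    # Direction of each frequency component, folded into [0, 180).
    # Vertical contours vary along x, so their energy lies near the
    # fx axis, i.e. at directions close to 0 (or 180) degrees.
    direction = np.degrees(np.arctan2(fy, fx)) % 180.0
    dist_from_fx_axis = np.minimum(direction, 180.0 - direction)

    # Keep everything outside the band around the fx axis.
    mask = dist_from_fx_axis > half_width_deg
    mask[0, 0] = True  # preserve mean luminance (DC component)

    spectrum = np.fft.fft2(image)
    return np.real(np.fft.ifft2(spectrum * mask))

# Example: a vertical grating is removed, a horizontal one is kept.
x = np.arange(64)
vertical_grating = np.tile(np.sin(2 * np.pi * 4 * x / 64), (64, 1))
horizontal_grating = vertical_grating.T
filtered_vertical = remove_vertical_orientations(vertical_grating)
filtered_horizontal = remove_vertical_orientations(horizontal_grating)
```

A real-time system would likely need a smoother mask to avoid ringing and a faster implementation than a per-frame FFT in NumPy; the sketch is meant only to show what "missing vertical orientations" means in image terms.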

Binocular Rivalry

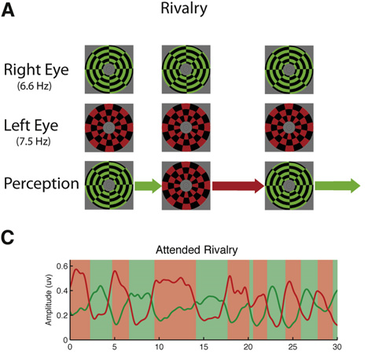

The images that reach our eyes are inherently ambiguous. In many cases, multiple interpretations are possible (for example, the "face vase" image), and the visual system must suppress one of them. We are particularly interested in understanding binocular rivalry: when two incompatible images are presented, one to each eye, perception alternates between them. This is a powerful model system for studying how information can be suppressed from awareness, and projects in the lab study when and how this suppression arises, as well as its neural bases. One line of research investigates the role of attention in producing suppression, examining how potentially conflicting neural signals interact both when attention is present and when it is withdrawn. We use fMRI, EEG, and simultaneously acquired EEG and fMRI to measure cortical signals during binocular rivalry.

Clinical Applications

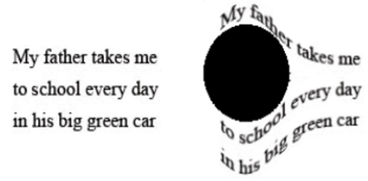

The research in the lab has implications for disorders of the visual system. We are currently using VR technology to "remap" the visual field of patients with age-related macular degeneration, moving image content away from their blind spots.

We are also applying what we have learned about adaptation to patients with a little-known but surprisingly common condition called "visual snow", in which the world appears covered in tiny flickering specks. Adapting to high-contrast patterns has allowed some participants with visual snow to see the world without it, at least for a short while, for the first time.